Talking with Amith Nair

Around the time of KubeCon & CloudNativeCon Virtual 2020, the cloud report had the opportunity to talk with Amith Nair, Vice President of Product Marketing at HashiCorp, about automation, what a company can do for cloud-native development, and the heart of open source.

Hi, great to meet you! Please, introduce yourself, what are you doing? What is HashiCorp doing?

Hi, thank you very much. I’m Amith Nair and I run the product marketing organization for HashiCorp. I’m excited to be here, this is a great opportunity for us to display how HashiCorp products integrate with all the different options out there, especially from a Kubernetes standpoint.

HashiCorp is built on the belief that there is a fundamental change and shift happening in the market – from a static cloud environment to a public cloud environment. But in that transition there is a lot happening that is not what most folks in IT organizations are traditionally used to because of the influx of DevOps.

Our products have a consistent workflow mechanism that helps bridge the gap between the old static world and new dynamic environment across different layers of the cloud operating model.

At the infrastructure layer we have a product called Terraform which, like all HashiCorp products, is available both as open source as well as commercially. Our security offering is a product called Vault, which again, is available through open source and commercially. The networking layer solution is called Consul and the runtime layer solution is Nomad.

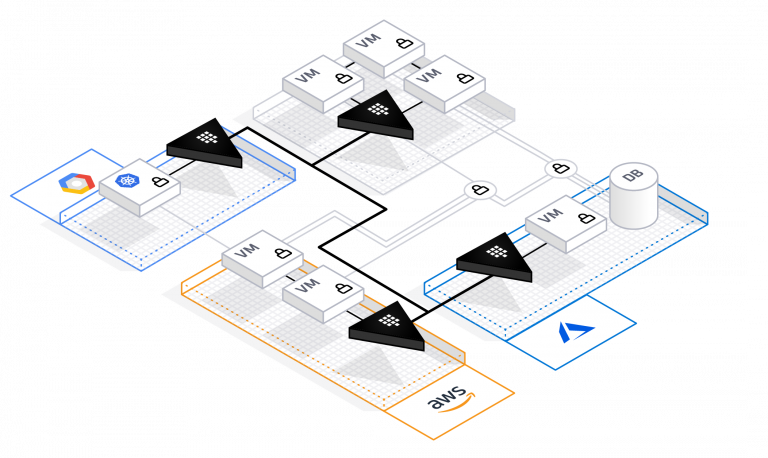

All of these solutions integrate with Kubernetes. We work on a consistent platform in terms of enabling the ecosystem to work together. At the end of the day our goal is to help accelerate the deployment of applications to any cloud while also working with any existing infrastructure such as a private cloud or datacenter – making sure that the old world plays really well with the new one.

That sounds great. To go into more detail, recently you published the news that you are releasing multi-cloud infrastructure automation. Please, tell us something about that.

The first step in transitioning to the cloud, as we learned from talking to customers, developers and practitioners, is about enabling the infrastructure. You need a platform where you can actually deploy the applications. The overall journey is defined by the parameters that we call the Cloud Operating Model which highlights all the different aspects needed.

You have to start with infrastructure at the base, then add a layer of security, followed by networking components and then run-time orchestration. Multi-cloud infrastructure automation really takes into account all of these different components whereby we enable the app developer, the operations folks who are involved with cloud and DevOps.

To ensure this happens seamlessly we make sure there is a consistent workflow that is also self-service. From a developer standpoint, if they are trying to enable infrastructure in the cloud, they should be able to take pre-existing templates or pre-defined, pre-approved templates across all of these different categories or groups within an organization and deploy almost seamlessly and automatically.

That’s pretty interesting. I have a background as a developer. So, for me the question is obvious: Is there a strategy for HashiCorp to integrate the developer tools even better? Multi-cloud automation is something that I would see as a developer being integrated in my favorite IDE as much as possible, because I’m not able to handle that perhaps, but HashiCorp, specifically Terraform, can do that, right?

Yes. Terraform today has over 1200 providers across multiple integration points. I would say one of the strengths that Terraform has is the integration into the ecosystem to enable that automation.

One of the four pillars for Terraform – and of all HashiCorp products – is the automation aspect, which is why we believe strongly that creating a consistent workflow is extremely important.

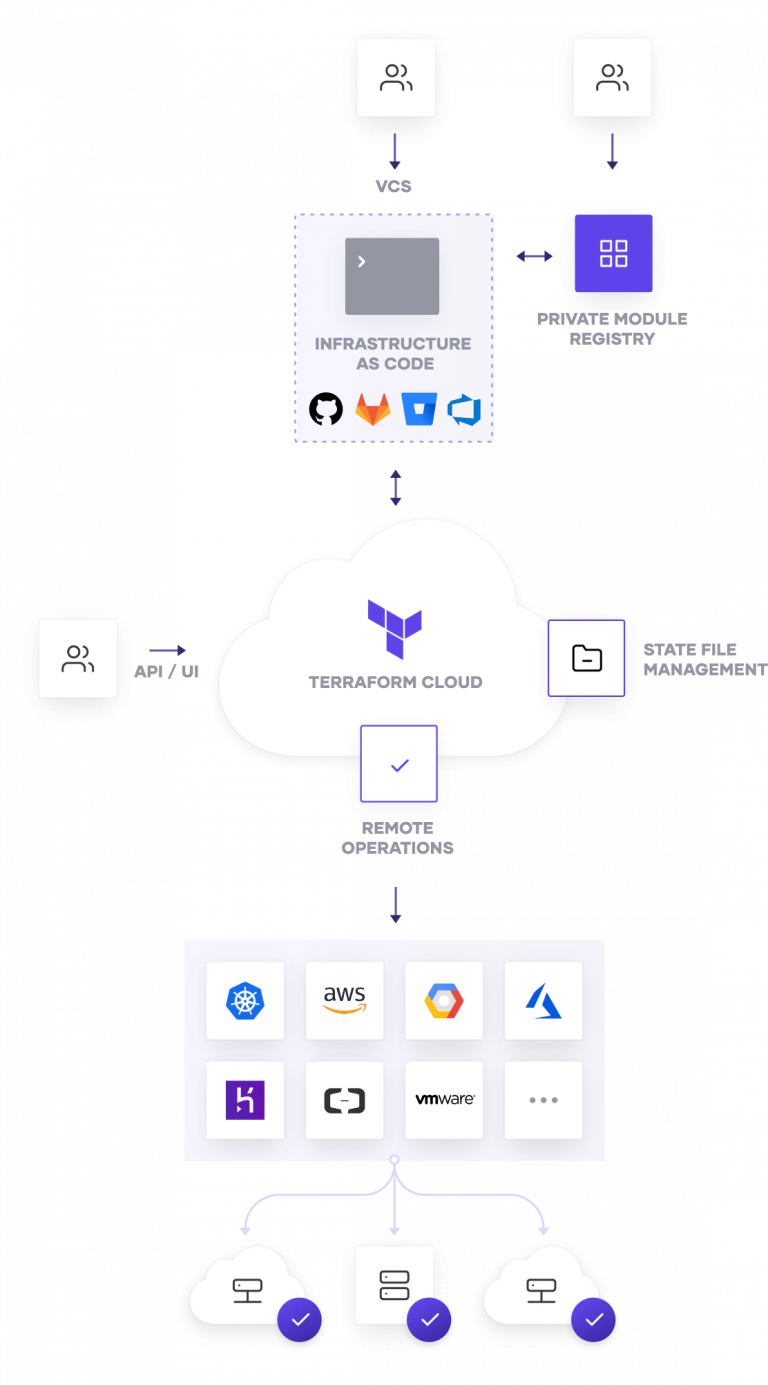

What Terraform does really depends on what you use in your infrastructure, such as a firewall provider, GitLab or GitHub, or any cloud provider (fig. 1).

Terraform has providers for each of these applications or products that tie into the automation aspect of Terraform to create a single workflow in a template. That is definitely one of the key benefits of using Terraform. For developers, I think one of the key benefits they derive from Terraform and all of our tools is that the template is pre-approved by the ops team, which says: ‘if you are deploying an application that looks like this, then this is the set of approved infrastructure components that you need, with no need for reconfiguration’.

Additionally, it is a self-service model at that point, where the developer can say that because it’s available from the ops team, they are going to make it part of infrastructure as code for Terraform and deploy it. It makes the transition and operational aspects simpler, easier and seamless, and it’s the automation aspect which drives the benefit of everything we do.

Fig. 1: Dynamic infrastructure with Terraform

The kind of automation aspect of this is really interesting for me because I don’t like waiting for things for example. So, looking at cloud environments, specifically more complex cloud environments, it takes a lot of time until all the automated steps are executed. Can you tell us a little bit about the ability of Terraform to execute multiple jobs at the same time, because I feel like that is another very interesting aspect?

It is an extremely important aspect, and we have some large complex customers that specifically would request concurrent runs. In addition to our on-premise solution Terraform Enterprise, we recently announced a SaaS solution – Terraform Cloud (fig. 2).

The initial thinking behind Terraform Cloud was primarily as a free tier for practitioners, with up to 4 users and single runs only, and then we expanded that to include teams and governance.

So, the traditional road velocity or the trajectory that we have seen with customers is that as more developers or practitioners start to use Terraform there comes a point where you want to collaborate, you want to include some governance and compliance on top of it.

From a Terraform Cloud perspective the next tier up was governance and compliance, but then as we started making that available, we were surprised at the number of requests we were getting from large enterprises who wanted to use Terraform Cloud, and their number one request was concurrent runs, or Applies. This is now available: instead of sequencing these Applies, you can run multiple Applies concurrently, which especially helps large teams.
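To make the difference between sequenced and concurrent Applies concrete, here is a minimal Python sketch. It does not call Terraform or Terraform Cloud; `apply_workspace` is a hypothetical stand-in that only sleeps to simulate provisioning time, and the workspace names are invented for illustration.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def apply_workspace(name: str) -> str:
    """Hypothetical stand-in for one Apply; sleeps to simulate provisioning."""
    time.sleep(0.05)
    return f"{name}: applied"


def apply_sequentially(names):
    """Run each Apply only after the previous one has finished."""
    return [apply_workspace(name) for name in names]


def apply_concurrently(names, max_workers=4):
    """Run independent Applies side by side; results keep the input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(apply_workspace, names))


if __name__ == "__main__":
    # Invented workspace names, assumed to be independent of each other.
    workspaces = ["network", "database", "frontend", "monitoring"]

    start = time.perf_counter()
    apply_sequentially(workspaces)
    sequential_seconds = time.perf_counter() - start

    start = time.perf_counter()
    apply_concurrently(workspaces)
    concurrent_seconds = time.perf_counter() - start

    print(f"sequential: {sequential_seconds:.2f}s, concurrent: {concurrent_seconds:.2f}s")
```

The sketch assumes the Applies do not depend on each other; jobs that share state would still need to be ordered.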

But really that aspect of being able to work with different colleagues and making the DevOps process faster is key.

You mentioned the time that it typically takes from a developer perspective to see actual traffic to the application can be really long. Previously we’ve worked with a telecommunications customer who told us that from the time the developer writes the application it takes them about 22 weeks before that application sees traffic. And that it takes over 70 steps in order to get there.

This is because the developer writes the application, submits a request or ticket to the ops team which has to reconcile the fact that there are a lot of infrastructure applications and decide on what aspects need to be considered. For example, is it going into a container? What cloud is it going into? What else needs to be done from an infrastructure standpoint?

Once approved it then goes to the security team. The security team is looking at things like: Do I need secrets management on this? What kind of role-based access control do I put in? What authentication mechanism do I have in the background? That all takes time.

Then it goes to the networking team. The networking team has to identify the right firewall rules and open up the right ports. By the time this has all been done, the developer is already developing their next application, before the previous one has seen any traffic.

That’s why the collection of tools becomes so important and easy. For example, let’s say a developer is developing a mobile application. Now they can have a pre-approved template from ops which says ‘if you meet these standards then just use this template’.

The same goes for the security team, which says: if this application has these parameters, here is a pre-approved template that gives you access to the right secrets management systems, the authentication mechanisms and so on. It’s then the same process for the network team.
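The self-service flow described above can be pictured as a lookup against a catalog of pre-approved templates, one per team. The following Python sketch is purely illustrative: the catalog, the template names and the `resolve_templates` helper are hypothetical and do not represent a HashiCorp API.

```python
# Hypothetical catalog: each team publishes the templates it has pre-approved,
# keyed by the kind of application being deployed.
APPROVED_TEMPLATES = {
    "ops": {"mobile-backend": "ops/container-service"},
    "security": {"mobile-backend": "security/secrets-and-auth"},
    "network": {"mobile-backend": "network/https-ingress"},
}


def resolve_templates(app_kind: str) -> dict:
    """Return the pre-approved template from every team for this kind of app.

    Raises LookupError when a team has no matching template, which in this
    sketch means the request falls back to a manual review by that team.
    """
    selected = {}
    missing = []
    for team, catalog in APPROVED_TEMPLATES.items():
        template = catalog.get(app_kind)
        if template is None:
            missing.append(team)
        else:
            selected[team] = template
    if missing:
        raise LookupError(
            f"no pre-approved template for {app_kind!r} from: {', '.join(missing)}"
        )
    return selected


if __name__ == "__main__":
    for team, template in resolve_templates("mobile-backend").items():
        print(f"{team}: {template}")
```

When every team has a matching entry, the developer can proceed without raising a ticket; a missing entry is the case where the slower hand-off between teams would still apply.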

So, what would typically take 22 weeks for this telecom operator dropped down to under five minutes. That is a really significant impact and demonstrates the true value of the application at speed and at scale our customers are realizing.

Fig. 2: Terraform Cloud – how it works

Just that example definitely proves where the value of HashiCorp products, specifically Terraform and the new business package actually lies. But looking a little bit more over the fence, you mentioned containers and Kubernetes, what is your standpoint towards Kubernetes and containers and how do you as HashiCorp support and integrate with them?

Across all of our products we have integrations into Kubernetes and containers. Eventually our goal is to make sure our customers – whether they are coming from an open source or enterprise perspective – have the flexibility to deploy the applications where they want. We are the enabler to make that happen and give them freedom of choice.

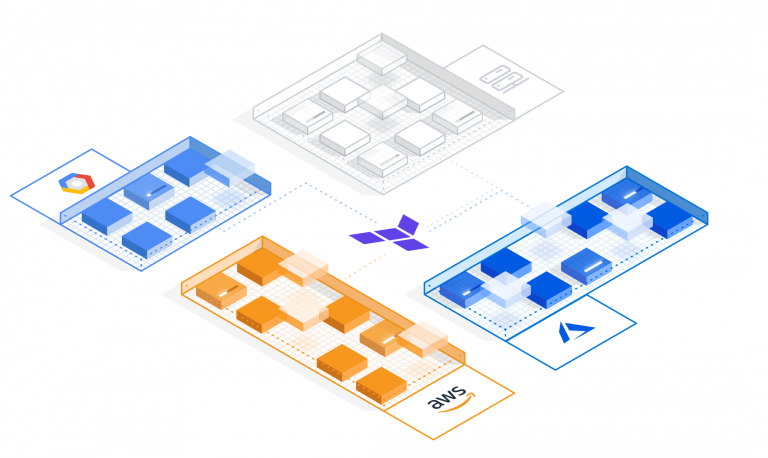

This is not just from the container aspect, but also from a cloud perspective, so you can run Kubernetes in Azure, AWS, GCP or Oracle Cloud (fig. 3). There shouldn’t be restrictions based on any kind of application sets. That’s our goal.

To that point we have Kubernetes and container integration in all of our products. For example, with Vault we announced Helm chart integration last year. There have been multiple changes since then and a lot of announcements as a result. Terraform also has integrations, as does Consul.

Nomad’s core focus is specifically as a container orchestration solution and it is getting a lot of attention from an open source download perspective. A big benefit of Nomad is that it works both concurrently with, and independently of, Kubernetes. It also works with legacy applications. So those people who are using Java applications or .NET applications can orchestrate them with Nomad while letting Kubernetes run the cloud-native applications. Nomad can also do both.

There are consistent integrations and continuous development happening across all our products in terms of Kubernetes and container integrations. That’s the short answer!

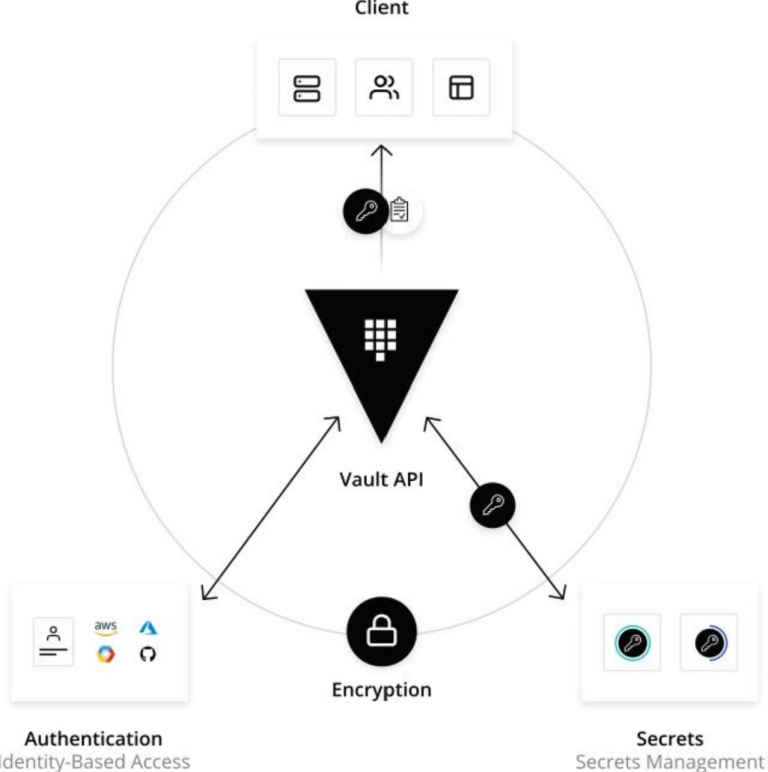

Fig. 3: Dynamic infrastructure with Vault

That was the short answer? Good to know! What is your long-term vision at HashiCorp? Where is it heading? What is the next big thing that is going to be addressed with HashiCorp’s products?

In terms of pure product announcements, we will have a few at HashiConf US digital (October 2020), which is our big event this year. From a pure product perspective, each product is going through its own maturity growth.

Terraform and Vault are the most mature in terms of where the product set is as they have been in the market for a long time. Terraform in many ways is the de facto standard when it comes to infrastructure provisioning. So really, for Terraform it’s improving the self-service capability, adding more automation capabilities in terms of creating templates and so on.

For Vault it is advancing beyond secrets management. As part of the most recent Vault releases, 1.4 and 1.5, we already announced things like advanced data protection, tokenization and KMIP support. All of these things really help go beyond secrets management, adding more crypto layers, enabling static data encryption as well as dynamic encryption of data in motion and so on (fig. 4).

For Consul it is really about evolving through the service mesh journey. Consul really started off purely by doing service discovery and registrations, so the core premise for Consul was to discover all the micro-services on a network because when most people talk about service mesh, they don’t realize that discovery is the biggest problem. Once you have that discovered then you can add the Layer 7 capabilities and the L4 intentions and so on. Consul has gone through significant evolutions in terms of its roadmap. Consul 1.8, which was announced a few months ago, has advanced service mesh capabilities, advanced connectivity and federation and so on.

For Nomad, again we’ve really helped with that orchestration aspect. But how do we extend beyond orchestration? How do we provide WAN federation?

The last announcement for Nomad was WAN Federation so there has been a lot of improvement from the pure product sets standpoint but there will also be a few exciting announcements in a couple of months’ time.

Overall, the vision for us is to make sure from a DevOps perspective and a developer standpoint that we continue to help developers successfully scale and launch applications at a rapid pace. And all of these layers matter in that journey.

Fig. 4: How Vault works

So, the vision is to provide developers with all of the automation they need, to provide them with the infrastructure and background tools they need, and to make sure they have those tools at their disposal, right?

Correct. And not lock them down to a specific set of cloud opportunities or platform and so on – give them flexibility.

The major aspect there is again perhaps the avoidance of vendor lock-in and platform lock-in, so HashiCorp is an important enabler with regard to openness and community-driven aspects.

That’s absolutely correct.

Regarding this, you mentioned in the beginning that you offer Terraform as an open source version and a commercial version. What are the differences and why is open source important for you?

The heart and soul of our company is open source and what drives us every day is the open source community which not only provides us feedback but also contributes to our goal. That is who we are and that will never change. The enterprise aspect of it is based on enterprises adopting the open source solution.

To be clear, the open source solution at the platform layer is exactly the same in terms of core capabilities as the enterprise version. Where the enterprise capabilities kick in is when large organizations need collaboration capabilities because otherwise 10 developers are doing the same thing. Why waste their time when you can actually collaborate on something that’s best practice and has already been created?

The second enterprise capability is the compliance aspect, where there should be a degree of role-based access control around who’s using what, how they use it and how they are implementing it.

The other aspects of enterprises are deeper auditing across the company or larger visualization in terms of dashboards. Things you would think of that maybe an open source practitioner does not really care about but a large organization from an IT perspective or a CIO would care about in terms of seeing how everything is implemented across the board and what is happening at the macro level or at the top level that’s affecting the overall throughput of the company.

A practitioner might not care much about cost estimation, but an individual IT organization will have a budget on how much can be spent in the cloud. Terraform cost estimation helps control and ties back to the budget, helping define how much infrastructure automation can be done and put limits on what can be implemented.

These kinds of things extend the core capabilities into the enterprise but the core focus for us is always the developers and practitioners.

That is awesome, specifically the governance aspects you mentioned as well as the integration into the enterprise ecosystem. Is there anything that you would like our readers to take with them? What should they know with regard to HashiCorp and your products?

The one thing I want to say is that HashiCorp, at its heart and soul, is built by developers. The two founders Mitchell and Armon are developers who still write code and build products today. They understand for the most part what is happening out there, what the challenges are. And you will see that reflected in our products through and through.

We continue to welcome feedback – whether it’s good or bad. We prefer the good of course, but still take on the bad, because that helps us to improve our product lines. We will always be there as a support set for all of our practitioners and developers.

The four products that we have plus our two other open source solutions – Vagrant and Packer – are instrumental in our minds in terms of helping drive cloud adoption at scale for developers and organizations across the board.

It is intentional that every release and every product update that we come out with ensures that automation continues to make things easier for the developer. This means that they can focus on what is most important to them – making sure they build the right application set to drive the business and their organization forward.

We will be in the background, helping them achieve their goals. We will continue to take their feedback on board and look at implementing it in our products.

That is awesome. As a developer I can totally identify with what you said and will happily take that to our readers. Thank you for all those insights and thank you for the great products you are providing the community.

Absolutely. If your readers want to learn more, we have learn.hashicorp.com. There you will find everything needed to get started with HashiCorp, including training videos and coaching on how to use the products. We put a lot of time into helping the community learn how to use the products so that they can get the most out of them.

We will spread the word. And thank you very much for your time!

Thank you for having me.

To watch the interview, look here: https://youtu.be/MLFjYKU1mp4

The interview was conducted by Karsten Samaschke and Friederike Zelke.

About Amith Nair

Amith Nair is currently VP of Product Marketing at HashiCorp where he is responsible for overall positioning and messaging of the HashiCorp portfolio of products. He has held similar product marketing and product management leadership positions, most recently at Microsoft, where he led product marketing and operations for Microsoft 365. He has a Master of Science degree from the University of Colorado, Boulder.
