Security is often a difficult discussion at many companies, because there are many competing demands to satisfy at the same time. How do we increase developer productivity, satisfy regulatory requirements, ensure production stability, and detect and remediate threats in real time? The answer won’t be simple, but advances in cloud-based technology are quickly making some established thought processes outmoded.

Tickets, and Policies, and InfoSec Reviews…oh my!

In the golden era of ITIL-based change management, one fairly important department that had to participate at all levels of discussion was the IT Risk or InfoSec team. From architecture, to implementation review, to production incident response, they were involved at every step of the software development and deployment lifecycle. As new methodologies such as DevOps and cloud-native development principles took hold and new threats began to emerge from everywhere all the time, these departments became overtaxed and were unable to fulfil their fundamental mission: to protect and manage organizational risk as it pertains to digital information assets. So, the question becomes: will these organizations evolve with the new threat landscape and the new development landscape? Will they adapt to embrace a fundamental shift towards change as a constant and learn to manage risk inside of it?

What do we need to protect?

For companies to protect and manage the risk to their digital assets, they need to consider new ways of automating their response to threats and begin to bake security into every aspect of the products and tools they ship to customers. But before we look at the ways we can protect our products, we need to understand our threat plane. For the rest of this article I will focus on securing workloads using cloud-native tools such as Kubernetes and containers, but the principles in this section should apply regardless of context. I want to give you this context because I believe that simply staring at security products and their features, without understanding the benefits they provide, can leave holes in the overall security landscape of a company. By taking the time to build this intuition, I believe you will be better prepared to assess threats and respond to them accordingly.

I like to categorize threats generally into two main areas: those which impact the runtime of a particular process and those which impact the communication between two processes. Put more concretely, I divide security into Computation and Networking. There are threats which exist outside of these areas, such as social engineering, but it is more difficult to present tools for automating a response to them, so they are out of scope for this article. The vast majority of threats that I see on a daily basis I would categorize as network-level threats. That is, an attacker must make use of a network connection to carry out their intended malice on the system.

Towards an automated response

So, to keep up with change, we need automated responses to threats, both internal and external, in these two areas. To do this well, we need automated tooling that allows IT security teams to dictate policy, enforce it programmatically, spot and remediate exceptions, and help educate engineers to build security in at the beginning of the software development lifecycle. The rest of this article explores the areas where we need security in a cloud-native landscape, makes some broad security suggestions, and offers advice on how to enforce security programmatically.

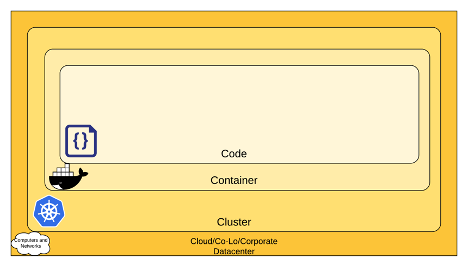

Fig. 1: The 4 C’s of cloud-native security

The 4 C’s of Cloud-Native Security

A while back, while doing some threat modeling for a company I used to work for, I began to appreciate just how interconnected the tools and services we leveraged were. Because of this, I was having a difficult time organizing my responses to threats and deciding at which tier of our stack I should respond to them. So, thinking like a software engineer, I began to modularize my approach to compute and network security in a new cloud-native world, and I came up with the 4 C’s of Cloud-Native Security (fig. 1).

This model of security follows a popular thought framework in the software and network security space known as “Defense in Depth”: we have multiple overlapping layers of defense that help us organize our response to threats. The 4C framework helped me visualize the different areas of concern I would have. If it’s not helpful to you, don’t worry…it’s just a mental model. The picture is organized as a hierarchy of the areas where we must concern ourselves with network and compute security. For example, if your Kubernetes, Nomad, DC/OS, Mesos, or other orchestrator cluster is fundamentally compromised, it doesn’t matter how secure your containers or code are: you are already starting from a compromised position. Thus, in this picture, the security of the Cloud, Co-Lo, or Corporate Datacenter forms the trusted computing base for a Cluster. The Cluster forms another trusted computing base for the Container, and the Container forms a trusted computing base for the application Code running inside it. In the following sections, I will reference some open source tools to help organize a holistic approach to cloud-native security using the above model. Note that much of this and more can be found on https://kubernetes.io. Those guys are awesome and do great work!
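
The layering described above can be illustrated with a few lines of Python. This is only a minimal sketch of the mental model: the names `LAYERS` and `trusted_layers`, and the rule that a layer is trusted only when everything beneath it is intact, are assumptions made for the illustration and do not come from any tool.

```python
# Layers of the 4 C's, ordered from the outermost trusted computing base inward.
LAYERS = ["cloud", "cluster", "container", "code"]


def trusted_layers(compromised):
    """Return the layers that can still be trusted.

    A layer is trusted only if it and every layer it is built on
    (everything earlier in LAYERS) are uncompromised.
    """
    trusted = []
    for layer in LAYERS:
        if layer in compromised:
            break  # everything built on top of this layer inherits the compromise
        trusted.append(layer)
    return trusted


if __name__ == "__main__":
    # A compromised cluster taints the containers and code running on it.
    print(trusted_layers({"cluster"}))
    # A compromised container says nothing about the cloud or cluster beneath it.
    print(trusted_layers({"container"}))
```

The point of the sketch is the `break`: securing an inner layer cannot make up for a compromised outer one.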

Cloud

While it is impossible to make specific recommendations because each cloud provider is different, the key to ensuring that your cloud is a trusted computing base for your cluster is to understand the cloud operating model of “shared responsibility.” For example, my current favorite cloud provider, AWS, has extensive documentation explaining that they are responsible for security OF the cloud… but you are responsible for securing your workloads IN their cloud. So how might we do that? There are a bunch of tools, but let’s use our thought model from earlier, which categorized threats into compute and network levels. Here are examples of how to handle each in AWS:

Compute

- Leverage the “at-rest” encryption that each service provides for your data. Examples are enabling server-side encryption (SSE) on an S3 bucket or encrypting an RDS instance with a KMS key

- Ensure that operating systems are always patched and up to date using the package manager of that operating system

- Subscribe to CVE feeds that let you know if something you have in production is vulnerable. If you are unfamiliar with CVEs, the acronym stands for Common Vulnerabilities and Exposures: a public, international catalog of disclosed software vulnerabilities that you can use to track and remediate them

- Use services like AWS Config to ensure that while you allow your developers to create new AWS resources and increase their velocity, you know the second something is out of compliance and can even automate your responses to it
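
As an illustration of the first item in this list, here is a minimal sketch that builds the default-encryption settings for an S3 bucket. The helper name `default_encryption_config`, the key alias, and the bucket name are hypothetical; the dictionary follows the `ServerSideEncryptionConfiguration` shape used by the S3 `PutBucketEncryption` API. The sketch only prints the structure so that it runs without AWS credentials.

```python
import json


def default_encryption_config(kms_key_id):
    """Build a ServerSideEncryptionConfiguration that encrypts new objects with KMS."""
    return {
        "Rules": [
            {
                "ApplyServerSideEncryptionByDefault": {
                    "SSEAlgorithm": "aws:kms",
                    "KMSMasterKeyID": kms_key_id,
                }
            }
        ]
    }


if __name__ == "__main__":
    # "alias/example-data-key" is a hypothetical KMS key alias.
    config = default_encryption_config("alias/example-data-key")
    print(json.dumps(config, indent=2))
    # With boto3 installed and credentials configured, the structure could be
    # applied to a (hypothetical) bucket like this:
    #   boto3.client("s3").put_bucket_encryption(
    #       Bucket="example-bucket",
    #       ServerSideEncryptionConfiguration=config,
    #   )
```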

Network

- Leverage AWS VPCs together with VPNs, VPC peering, and VPC endpoints so that your applications can communicate securely and privately with each other and with AWS services

- Use Security Groups that are locked down as tightly as possible so that no unnecessary traffic is allowed

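- Leverage VPC Flow Logs to get flow-level visibility into traffic (source, destination, ports, protocol, and whether it was accepted or rejected). Flow logs record metadata only; if you need packet-level inspection, look at VPC Traffic Mirroring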

- Use Amazon GuardDuty to let AWS classify threats based on its own intelligence algorithms and help you organize your response

- Use tools such as WAF and AWS Shield to protect endpoints from commonly known attacks

- Many of these controls can also be checked, and in some cases remediated, programmatically using AWS Config
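
To make the idea of programmatic enforcement concrete, the following sketch flags security group rules that accept traffic from anywhere. It assumes input shaped like the `SecurityGroups` list returned by the EC2 `DescribeSecurityGroups` API; the function name `open_ingress_rules` and the sample group IDs are hypothetical, and the check itself is a simplification of what a managed rule would do.

```python
def open_ingress_rules(security_groups):
    """Yield (group_id, from_port, to_port) for every ingress rule open to the world."""
    for group in security_groups:
        for rule in group.get("IpPermissions", []):
            cidrs = [r.get("CidrIp") for r in rule.get("IpRanges", [])]
            cidrs += [r.get("CidrIpv6") for r in rule.get("Ipv6Ranges", [])]
            if "0.0.0.0/0" in cidrs or "::/0" in cidrs:
                yield group["GroupId"], rule.get("FromPort"), rule.get("ToPort")


# Hypothetical sample data in the DescribeSecurityGroups response shape.
SAMPLE_GROUPS = [
    {
        "GroupId": "sg-0example1",
        "IpPermissions": [
            {"IpProtocol": "tcp", "FromPort": 22, "ToPort": 22,
             "IpRanges": [{"CidrIp": "0.0.0.0/0"}]},
        ],
    },
    {
        "GroupId": "sg-0example2",
        "IpPermissions": [
            {"IpProtocol": "tcp", "FromPort": 5432, "ToPort": 5432,
             "IpRanges": [{"CidrIp": "10.0.0.0/16"}]},
        ],
    },
]

if __name__ == "__main__":
    for finding in open_ingress_rules(SAMPLE_GROUPS):
        print("open to the internet:", finding)
```

A check like this could run on a schedule or in a pipeline and feed whatever alerting you already use.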

Cluster

This article will focus on Kubernetes as the orchestrator of choice, but there are many others out there, and they will likely have similar security concerns to the ones we’ll discuss here. Kubernetes security is a dense topic, and I highly recommend additional reading in the Kubernetes open source documentation for examples and walkthroughs of what to do. But here are some things to consider for Kubernetes:

RBAC

Kubernetes secures its API with role-based access control (RBAC), which has been stable since version 1.8. By leveraging Roles and RoleBindings (scoped to a namespace) or ClusterRoles and ClusterRoleBindings (cluster-wide), cluster operators can control who is able to manipulate which resources in Kubernetes. Much in the same way you would want to be careful about what access you give in AWS IAM, you will want to be similarly cautious in Kubernetes.
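
As a minimal sketch of least-privilege RBAC, the snippet below builds a namespaced Role that can only read Pods and a RoleBinding that grants it to one user. The namespace, role name, and user name are hypothetical. The manifests are emitted as JSON (which `kubectl apply -f` accepts alongside YAML) only to keep the example free of third-party dependencies.

```python
import json

RBAC_GROUP = "rbac.authorization.k8s.io"


def read_only_role(namespace, name="pod-reader"):
    """A Role that may only read Pods and their logs in one namespace."""
    return {
        "apiVersion": f"{RBAC_GROUP}/v1",
        "kind": "Role",
        "metadata": {"namespace": namespace, "name": name},
        "rules": [
            {
                "apiGroups": [""],  # "" is the core API group
                "resources": ["pods", "pods/log"],
                "verbs": ["get", "list", "watch"],
            }
        ],
    }


def bind_role_to_user(namespace, role_name, user):
    """A RoleBinding that grants the Role above to a single user."""
    return {
        "apiVersion": f"{RBAC_GROUP}/v1",
        "kind": "RoleBinding",
        "metadata": {"namespace": namespace, "name": f"{role_name}-{user}"},
        "subjects": [{"kind": "User", "name": user, "apiGroup": RBAC_GROUP}],
        "roleRef": {"kind": "Role", "name": role_name, "apiGroup": RBAC_GROUP},
    }


if __name__ == "__main__":
    manifests = {
        "apiVersion": "v1",
        "kind": "List",
        "items": [
            read_only_role("payments"),
            bind_role_to_user("payments", "pod-reader", "jane"),
        ],
    }
    print(json.dumps(manifests, indent=2))
```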

PodSecurityPolicies

PodSecurityPolicies allow you to dictate how a Pod is allowed to run on a Node. This is helpful if you want to enforce that Pods cannot run as the root user in Linux or that they cannot mount a particular hostPath. By utilizing these, cluster operators can have confidence that Kubernetes will only admit and start a Pod which complies with these policies. Note that PodSecurityPolicy was deprecated in Kubernetes 1.21 and removed in 1.25; on newer clusters, the built-in Pod Security Admission controller fills the same role.
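
The two restrictions mentioned above (no root user, no hostPath) could be expressed roughly as follows. This is a sketch under stated assumptions: the policy name is hypothetical, and the manifest targets the `policy/v1beta1` API, which exists only on clusters older than Kubernetes 1.25. It is printed as JSON to avoid a YAML dependency.

```python
import json

# Volume types the policy allows. hostPath is deliberately left out, so a Pod
# that tries to mount a host path is rejected at admission time.
ALLOWED_VOLUMES = [
    "configMap",
    "secret",
    "emptyDir",
    "projected",
    "downwardAPI",
    "persistentVolumeClaim",
]

RESTRICTED_PSP = {
    "apiVersion": "policy/v1beta1",  # removed in Kubernetes 1.25
    "kind": "PodSecurityPolicy",
    "metadata": {"name": "restricted-example"},  # hypothetical name
    "spec": {
        "privileged": False,
        "allowPrivilegeEscalation": False,
        "runAsUser": {"rule": "MustRunAsNonRoot"},  # refuse containers running as UID 0
        "seLinux": {"rule": "RunAsAny"},
        "supplementalGroups": {"rule": "RunAsAny"},
        "fsGroup": {"rule": "RunAsAny"},
        "volumes": ALLOWED_VOLUMES,
    },
}

if __name__ == "__main__":
    print(json.dumps(RESTRICTED_PSP, indent=2))
```

A policy only takes effect for Pods whose user or service account has been granted the `use` verb on it through RBAC.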

NetworkPolicies

Depending on the CNI plugin that your cluster uses, you may have the ability to apply NetworkPolicies to your cluster. These operate in much the same way that AWS Security Groups do and can be very helpful for restricting access between Pods inside of your cluster so that they communicate only under specific conditions. For example, you might not want your marketing website Pod talking directly to your payments database Pod. NetworkPolicy objects help us enforce this.
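
The marketing-versus-payments example could look roughly like this. The labels, namespace, policy name, and port are hypothetical; the structure follows the `networking.k8s.io/v1` NetworkPolicy API. Once a Pod is selected by a policy with the `Ingress` policy type, any ingress that is not explicitly allowed is dropped, which is what keeps the marketing Pod out.

```python
import json


def allow_only(namespace, name, target_labels, client_labels, port):
    """Allow ingress to the target Pods only from Pods carrying client_labels."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"namespace": namespace, "name": name},
        "spec": {
            "podSelector": {"matchLabels": target_labels},
            "policyTypes": ["Ingress"],
            "ingress": [
                {
                    "from": [{"podSelector": {"matchLabels": client_labels}}],
                    "ports": [{"protocol": "TCP", "port": port}],
                }
            ],
        },
    }


if __name__ == "__main__":
    policy = allow_only(
        namespace="payments",
        name="payments-db-ingress",
        target_labels={"app": "payments-db"},
        client_labels={"app": "payments-api"},
        port=5432,
    )
    print(json.dumps(policy, indent=2))
```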

Quotas

While less sophisticated, never underestimate the power of a Denial of Service attack to disrupt the normal flow of information to legitimate users. It can also impact the stability of your system. If the Pod templates in your Deployment objects already declare resources: blocks, you are in a much better position. Without them, Kubernetes assigns a QoS class of “BestEffort” to each of the Pods, and if one of them comes under attack it can expand and start to cause disruption to other Pods on the cluster. By utilizing Kubernetes ResourceQuotas, you can cap the total resources a namespace may consume and help ensure that this won’t happen.
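
To show how the resources: block relates to the QoS class, here is a simplified sketch of the classification rules, followed by a ResourceQuota for a namespace. The function `qos_class` is an illustration rather than the Kubernetes implementation: it ignores init containers and compares quantities as plain strings. The quota values and names are hypothetical.

```python
import json

COMPUTE = ("cpu", "memory")


def qos_class(containers):
    """Approximate the QoS class Kubernetes assigns to a Pod."""
    any_set = False
    guaranteed = True
    for container in containers:
        resources = container.get("resources", {})
        requests = resources.get("requests", {})
        limits = resources.get("limits", {})
        if any(name in requests or name in limits for name in COMPUTE):
            any_set = True
        for name in COMPUTE:
            limit = limits.get(name)
            request = requests.get(name, limit)  # requests default to the limit
            if limit is None or request != limit:
                guaranteed = False
    if not any_set:
        return "BestEffort"
    return "Guaranteed" if guaranteed else "Burstable"


# Caps the total compute a namespace can claim. With this quota in place,
# Pods that omit CPU or memory requests and limits are rejected.
NAMESPACE_QUOTA = {
    "apiVersion": "v1",
    "kind": "ResourceQuota",
    "metadata": {"namespace": "marketing", "name": "compute-cap"},
    "spec": {
        "hard": {
            "requests.cpu": "4",
            "requests.memory": "8Gi",
            "limits.cpu": "8",
            "limits.memory": "16Gi",
            "pods": "20",
        }
    },
}

if __name__ == "__main__":
    print(qos_class([{"name": "web"}]))
    print(qos_class([{"name": "web", "resources": {"requests": {"cpu": "100m"}}}]))
    print(json.dumps(NAMESPACE_QUOTA, indent=2))
```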

Secret management

Kubernetes secrets have come a long way since the beginning of the project, but there are still concerns about their encryption at rest. If you are not already encrypting your etcd volumes at rest in your cloud provider, then you should consider configuring an encryption provider on the API server (through an EncryptionConfiguration file) so that secrets are encrypted before they are written to etcd and are only decrypted when they are requested, for example when a Pod needs them.
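
A minimal sketch of such a configuration follows. It generates a random 32-byte key and places it in an `EncryptionConfiguration` that uses the `aescbc` provider, with `identity` as a fallback so that existing unencrypted secrets can still be read. The key name is hypothetical, a KMS-backed provider is often a better fit in a cloud environment, and the file is more commonly written as YAML; JSON is used here only to stay within the standard library.

```python
import base64
import json
import secrets


def encryption_configuration(key_name="key1"):
    """Build an API server EncryptionConfiguration that encrypts Secret objects."""
    key = base64.b64encode(secrets.token_bytes(32)).decode("ascii")
    return {
        "apiVersion": "apiserver.config.k8s.io/v1",
        "kind": "EncryptionConfiguration",
        "resources": [
            {
                "resources": ["secrets"],
                "providers": [
                    # The first provider is used for writes.
                    {"aescbc": {"keys": [{"name": key_name, "secret": key}]}},
                    # identity lets the API server read data stored unencrypted.
                    {"identity": {}},
                ],
            }
        ],
    }


if __name__ == "__main__":
    # The resulting file is passed to kube-apiserver with
    # --encryption-provider-config and must itself be kept secret.
    print(json.dumps(encryption_configuration(), indent=2))
```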

Audit Logging

Kubernetes audit logging is a way to get a transcript of every action taken on a cluster. This is important for performing forensic analysis after an attack has been carried out, or for understanding whether bad actors are performing tasks in your cluster that they should not be.

TLS

The Kubernetes API server requires TLS to communicate with it, but your apps may not. You can leverage the TLS termination of Kubernetes Ingress objects to ensure that traffic coming into the cluster is encrypted. If you want to ensure that all communication between all Pods is encrypted, then you should consider using a Service Mesh tool such as Istio or Linkerd.
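
A minimal sketch of an Ingress that terminates TLS is shown below. The host, Service name, and Secret name are hypothetical; the Secret is assumed to be of type `kubernetes.io/tls` and to already hold the certificate and key. The structure follows the `networking.k8s.io/v1` Ingress API.

```python
import json


def tls_ingress(namespace, host, service_name, service_port, tls_secret):
    """Build an Ingress that serves one host over TLS and routes to one Service."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {"namespace": namespace, "name": f"{service_name}-tls"},
        "spec": {
            # The certificate and key are read from this Secret.
            "tls": [{"hosts": [host], "secretName": tls_secret}],
            "rules": [
                {
                    "host": host,
                    "http": {
                        "paths": [
                            {
                                "path": "/",
                                "pathType": "Prefix",
                                "backend": {
                                    "service": {
                                        "name": service_name,
                                        "port": {"number": service_port},
                                    }
                                },
                            }
                        ]
                    },
                }
            ],
        },
    }


if __name__ == "__main__":
    ingress = tls_ingress("shop", "shop.example.com", "shop", 80, "shop-tls")
    print(json.dumps(ingress, indent=2))
```

Note that traffic between the ingress controller and the Pod is still plain HTTP in this setup, which is the gap a service mesh is meant to close.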

Container

Container security is roughly the same as VM security since, for these purposes, containers are in effect micro VMs running on your VMs in the cloud. This section will not speak about how to secure the container runtime, but rather the constructed containers themselves. Here in the container, the name of the game in security is vulnerability, binary, and capability management. Basically, which processes should your application be allowed to invoke, and which dependencies in the operating system does it have? By diagramming these dependencies you can stay up to date with vulnerabilities and ensure that, even if your container is compromised, only the binaries required to run your application exist in the container and those processes cannot use any sensitive capabilities. There are many scanning tools, such as CoreOS’s Clair, that help you scan and understand the vulnerabilities of your containers. One more thing to note: just because a container passed a scan in your pipeline doesn’t mean that a vulnerability hasn’t been announced against it since it reached prod. Perform regular checks of the dependencies in your container and stay up to date with vulnerabilities as they are announced.
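
The point about re-checking images after they ship can be sketched as follows: keep an inventory of the packages in each deployed image and compare it against advisories as they are announced. Everything here is hypothetical (the package names, the advisory IDs, and the dotted-integer version format), and a real scanner such as Clair handles distribution-specific version schemes that this sketch does not.

```python
def parse_version(text):
    """Parse a simple dotted version such as "1.2.10" into a comparable tuple."""
    return tuple(int(part) for part in text.split("."))


def affected_packages(inventory, advisories):
    """Return advisories that apply to the packages installed in an image."""
    hits = []
    for advisory in advisories:
        installed = inventory.get(advisory["package"])
        if installed is None:
            continue  # the package is not in the image at all
        if parse_version(installed) < parse_version(advisory["fixed_in"]):
            hits.append(
                (advisory["id"], advisory["package"], installed, advisory["fixed_in"])
            )
    return hits


# Hypothetical inventory recorded when the image was built and scanned.
IMAGE_INVENTORY = {"libexample": "1.2.3", "exampletls": "3.0.9"}

# Hypothetical advisories announced after the image went to production.
NEW_ADVISORIES = [
    {"id": "EXAMPLE-0001", "package": "libexample", "fixed_in": "1.2.10"},
    {"id": "EXAMPLE-0002", "package": "exampletls", "fixed_in": "3.0.9"},
    {"id": "EXAMPLE-0003", "package": "notinstalled", "fixed_in": "2.0.0"},
]

if __name__ == "__main__":
    for hit in affected_packages(IMAGE_INVENTORY, NEW_ADVISORIES):
        print("needs rebuild:", hit)
```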

Additionally, consider leveraging a tool such as Notary to ensure that software updates to your application are secure. This is a signing and verification procedure, which can ensure that you are always deploying the version of a container that you intended to by leveraging multiple keys in a chain of custody. More can be found by Googling “Docker content trust”.

Code

As with the cloud layer, I cannot recommend specific secure coding standards because there are too many languages and libraries to consider when it comes to code security. If you are already using a type-safe, statically compiled language, then you are moving in the right direction. Interpreted languages can be more exposed to attack because the source code must live in the production environment, which gives an attacker with persistent access (an advanced persistent threat) the chance to modify that source code to do malicious things such as data exfiltration. Just as in the container section, modern management of third-party library dependencies and their vulnerabilities is an absolute must to ensure that you are not shipping any obvious vulnerabilities into a production-like environment. There are many open source and commercial tools to achieve this, and they vary by environment.

Wrapping it up in a pipeline

We’ve discussed a framework for thinking about cloud-native security and for organizing a response to various areas of threats, and we’ve talked about how to automate policy so that IT security teams can ensure compliance without reducing developer velocity. In closing, I’d like to recommend some additional reading on how to tie this all together in a DevOps pipeline. The article is by a good friend of mine, Austin Adams, and it’s worth checking out: https://thenewstack.io/beyond-ci-cd-how-continuous-hacking-of-docker-containers-and-pipeline-driven-security-keeps-ygrene-secure/

Thanks again for your time. Good luck and stay secure!

Zach Arnold works as an engineer for a financial services company in the Bay Area of California. He is currently studying for his Master’s in Computer Science with a concentration in Machine Learning at Georgia Tech. His current passion and hobby is learning from and working with members of the Kubernetes open source community. One recent result has been the opportunity to participate in writing a book on Kubernetes: https://www.packtpub.com/cloud-networking/the-kubernetes-workshop

Contact: mobile: +1-843-637-1251, email: me@zacharnold.org, and LinkedIn: https://www.linkedin.com/in/zparnold/